ApplyAdadelta

TensorFlow C++ API

tensorflow::ops::ApplyAdadelta

Updates ‘*var’ according to the Adadelta scheme.

Summary

The update is computed in four steps, where accum_update is the argument of the same name:

accum = rho() * accum + (1 - rho()) * grad.square();

update = (accum_update + epsilon()).sqrt() * (accum + epsilon()).rsqrt() * grad;

accum_update = rho() * accum_update + (1 - rho()) * update.square();

var -= update;
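The summary formula can be traced by hand with a small stand-alone sketch. The code below is not the TensorFlow kernel; it is a plain C++ illustration that applies the same four steps element by element. The struct `AdadeltaState` and the function `adadelta_step` are hypothetical names introduced for this example. The summary does not show where `lr` enters, so the sketch assumes it scales the final update; with `lr = 1.0` the sketch follows the summary exactly as written.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical container for the three state tensors (var, accum, accum_update).
struct AdadeltaState {
    std::vector<double> var;
    std::vector<double> accum;
    std::vector<double> accum_update;
};

// One element-wise Adadelta step following the summary formula.
// Assumption: lr scales the final update; lr = 1.0 matches the summary as written.
void adadelta_step(AdadeltaState& s, const std::vector<double>& grad,
                   double lr, double rho, double epsilon) {
    assert(grad.size() == s.var.size());
    for (std::size_t i = 0; i < s.var.size(); ++i) {
        const double g = grad[i];
        // accum = rho * accum + (1 - rho) * grad^2
        s.accum[i] = rho * s.accum[i] + (1.0 - rho) * g * g;
        // update = sqrt(accum_update + epsilon) * rsqrt(accum + epsilon) * grad
        const double update = std::sqrt(s.accum_update[i] + epsilon) /
                              std::sqrt(s.accum[i] + epsilon) * g;
        // accum_update = rho * accum_update + (1 - rho) * update^2
        s.accum_update[i] = rho * s.accum_update[i] + (1.0 - rho) * update * update;
        // var -= update (scaled by lr under the assumption above)
        s.var[i] -= lr * update;
    }
}
```

Note that the update is computed from the old value of accum_update and the new value of accum, which is why the order of the statements matters.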

Arguments:

- scope: A Scope object

- var: Should be from a Variable().

- accum: Should be from a Variable().

- accum_update: Should be from a Variable().

- lr: Scaling factor. Must be a scalar.

- rho: Decay factor. Must be a scalar.

- epsilon: Constant factor. Must be a scalar.

- grad: The gradient.

Optional attributes (see Attrs):

- use_locking: If True, updates to the var, accum and accum_update tensors are protected by a lock; otherwise the behavior is undefined, but may exhibit less contention.
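To illustrate what lock protection means here, the following sketch guards the read-modify-write of the three state values with a mutex when `use_locking` is true. It is only a conceptual model in plain C++, not how TensorFlow implements the attribute; `LockedSlot` and `apply_adadelta` are hypothetical names, the state is reduced to scalars, and `lr` is assumed to scale the final update.

```cpp
#include <cassert>
#include <cmath>
#include <mutex>

// Hypothetical scalar state with its own mutex.
struct LockedSlot {
    double var = 0.0;
    double accum = 0.0;
    double accum_update = 0.0;
    std::mutex mu;
};

// Applies one Adadelta step. When use_locking is true, the whole
// read-modify-write sequence runs while holding the slot's mutex.
void apply_adadelta(LockedSlot& s, double lr, double rho, double epsilon,
                    double grad, bool use_locking) {
    assert(rho >= 0.0 && rho <= 1.0);
    std::unique_lock<std::mutex> lock(s.mu, std::defer_lock);
    if (use_locking) {
        lock.lock();  // released automatically when lock goes out of scope
    }
    s.accum = rho * s.accum + (1.0 - rho) * grad * grad;
    const double update =
        std::sqrt(s.accum_update + epsilon) / std::sqrt(s.accum + epsilon) * grad;
    s.accum_update = rho * s.accum_update + (1.0 - rho) * update * update;
    s.var -= lr * update;  // assumption: lr scales the final update
}
```

When only one thread touches the state, both settings give the same result. Without the lock, two threads calling the function on the same slot could interleave their reads and writes of accum, accum_update and var, which is the undefined behavior the attribute description refers to.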

Returns:

Output: Same as “var”.

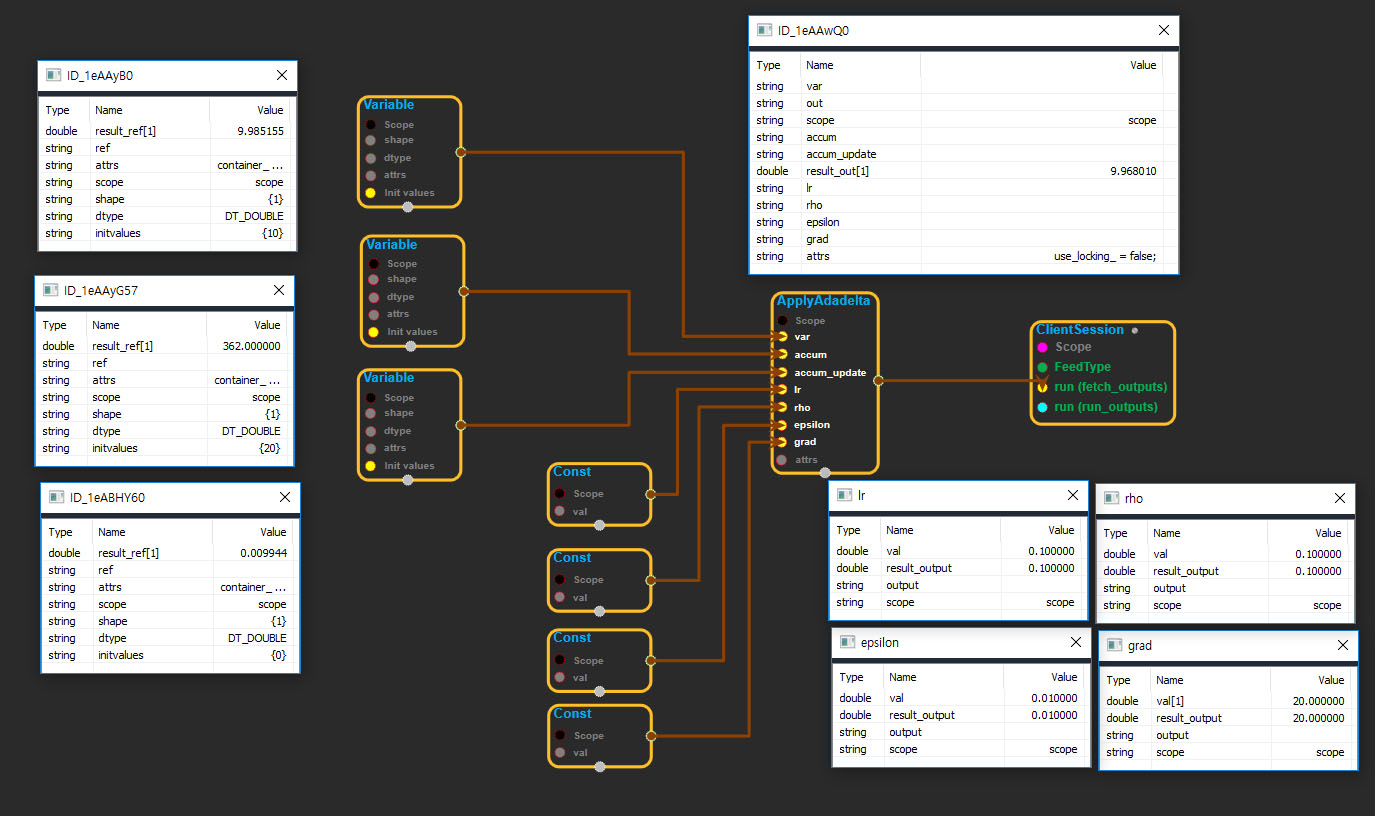

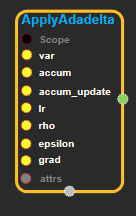

ApplyAdadelta block

Source link: https://github.com/EXPNUNI/enuSpaceTensorflow/blob/master/enuSpaceTensorflow/tf_training.cpp

Arguments:

- Scope scope: A Scope object. (A scope is generated automatically for each page, so the scope does not need to be connected.)

- Input var: Connect an Input node.

- Input accum: Connect an Input node.

- Input accum_update: Connect an Input node.

- Input lr: Connect an Input node.

- Input rho: Connect an Input node.

- Input epsilon: Connect an Input node.

- Input grad: Connect an Input node.

- ApplyAdadelta::Attrs attrs: Enter the attributes as a value, e.g. use_locking_ = false;

Returns:

- Output output: The Output object of the ApplyAdadelta class object.

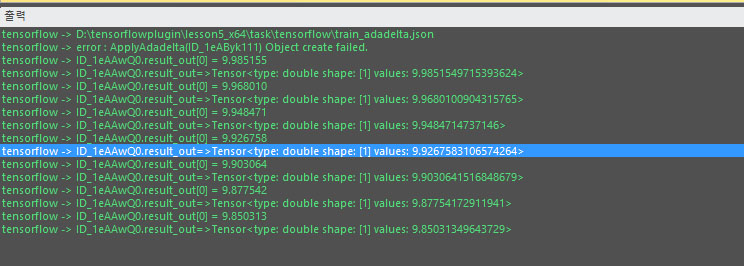

Result:

- std::vector(Tensor) result_output: The result object returned when the session is run.

Using Method